There are so many services for formatting sources…why use an LLM for this?

Because many people still don’t understand that AI isn’t a reliable source of info. I have seen people use it to fact-check things in arguments before, as if it would actually give them a factual answer.

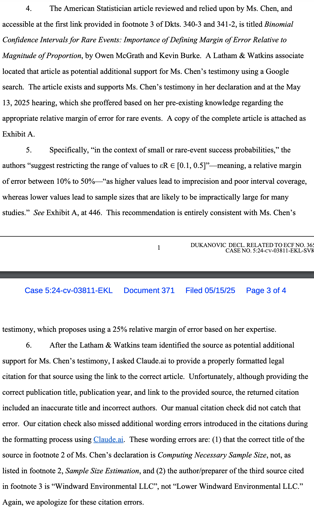

This is far worse than just being an unreliable source of info. Ms Chen had all the info she needed, and Claude falsified it.

This doesn’t appear as bad as some of the other AI legal stuff. Formatting references isn’t really about generating content as much as structuring it, and AI (usually) doesn’t have the same kind of problems with hallucinations when it’s just tasked with reorganizing data. I’ve used GPT for reformatting references to APA style and it worked really well. I’m surprised Claude couldn’t handle this task.

Also bummed that there doesn’t appear to be a book called a statisticians guide to making inferences with noisy data, because that sounds like a book worth checking out.

They gave it a link to the paper, not the text of the paper. So it probably couldn’t actually access the URL and just pulled from its training.

This should be cause for contempt. This isn’t much worse, IMO, than a legal briefing mentioning, “as affirmed in the case of Pee-pee vs. Poo-poo.” They’re basically taking a shit on the process by not verifying their arguments.

My best guess is that the judge was more annoyed by the fact that the briefs were all printed out on a shitty black and white laser printer, and the watermark was just wasting toner and making it harder to read without glasses. It could also be a complaint about the file size, because watermarking every page means you’re sending that image on every single page of the pdf. No reason to turn a 150KB text file into a 30MB file, especially when the latter is too large to attach to an email.

There are also some judges that just have a stick up their ass about respecting the sanctity of the court. But there are valid arguments besides “respect mah authoritah”.

Being a model citizen and a person of taste, you probably don’t need this reminder, but some others do: Federal judges do not like it when lawyers electronically watermark every page of their legal PDFs with a gigantic image—purchased for $20 online—of a purple dragon wearing a suit and tie. Not even if your firm’s name is “Dragon Lawyers.”

Lmao

Lmao one of the pages on their website lists a few events at 123 Legal Ave, Suite 100, City, State, 12345. I’m starting to get the feeling these people don’t take their job very seriously.

The “divorce” link just leads to “divorce”. Not “https://shittysite/divorce”. Just “divorce”.

We really are just getting stupider and stupider, aren’t we?

Most are, yes…why learn anything when companies will speak for you?

How is this not considered fraud? Or at least grounds to hold them in contempt?

AI’s second innovation, besides letting you mass fire labor, is removing all blame for any decision as long as you can thinly point to AI being involved.

It outsources responsibility, and our legal/political/moral systems are not built to handle it.

But it legally doesn’t. That is why AI has not taken over in high-liability fields. Morons are testing the waters and learning that AI mistakes make no difference in a courtroom, and if anything are further evidence of negligence.

The big bet now, I think, is whether those popup insurance policies covering losses related to AI usage end up profitable. If so, that is what will lead to truly dystopian stories like “AI piloted passenger jet crashes, United Airlines fined x million dollars but happily continues using AI pilots because insurance covered the fine and it’s just a cost of doing business.”

How are so many professional people so ignorant about AI?

Because they’re professionals in unrelated fields. Understanding AI was never part of their job description. This strange and confusing technology snuck up on everyone, and most people don’t really know what’s going on; they were never ready for this.

Can we normalize not calling them hallucinations? They’re not hallucinations. They are fabrications; lies. We should not be romanticizing a robot lying to us.

Pretty ingrained vocabulary at this point. “Lies” implies intent. I would have preferred “errors.”

Also, for the record, this is the most dystopian headline I’ve come across to date.

If a human does not know an answer to a question, yet they make some shit up instead of saying “I don’t know”, what would you call that?

If you train a parrot to say “I can do calculus!” and then you ask it if it can do calculus, it’ll say “I can do calculus!”. It can’t actually do calculus, so would you say the parrot is lying?

That’s a lie. They knowingly made something up. The AI doesn’t know what it’s saying, so it’s not lying. “Hallucinating” isn’t a perfect word but it’s much more accurate than “lying.”

“Fabrications” seems ok to me

Bullshit.

This is what I’ve been calling it. Not as a pejorative term, just descriptive. It has no concept of truth or not-truth, it just tells good-sounding stories. It’s just bullshitting. It’s a bullshit engine.

I like fabrication going forward. Clearly made up, doesn’t imply intent

The word hallucination has zero implication of intent whatsoever. Last time I checked hallucination is an entirely involuntary experience, regardless of the context the word is used in.

They are called hallucinations in computer science not to “romanticize” them, but because the output is totally random from the perspective of the input. If there is no logical path from the input to the output, it is similar to a human hallucinating. A human sees no actual weird visual stimulus that results in them hallucinating a dragon, so the input from their eyes has no bearing on what they imagine is actually there.

This is different from “fabrication” in that the AI intentionally creating fake info based on your input request would not be a hallucination, because there would be a relationship between input and output.

While you say you prefer “fabrication”, the word fabrication actually implies some intent that is absent from what we are referring to as AI hallucinations.

I meant that fabrication doesn’t imply intent as “lies” would.

It seems like you use the hallucinations term correctly, when output has no relation to input.

In this case, as in numerous others, the AI took the input “cite a source” and did output a cited source as requested, but invented the content of the source. It fabricated, which means to make up, to create.

Fabricate does not imply intent to deceive, where lie does.

I will agree that if the output is purely unrelated to the input, hallucination is still fine, but it is absolutely a romanticized term when we’re referring to this computer-generated code… It’s literally personification.

Everything an LLM outputs is hallucinated. That’s how it works. Sometimes the hallucination matches reality, sometimes it doesn’t.

Emm no… Why?

Vibe litigation

The AI ate my homework.